Calling for safegaurds in fabricated research papers generated in ChatGPT's output

As artificial intelligence (AI) becomes more prevalent in fields ranging from art and music to programming and driving, companies and researchers are increasingly turning to this technology as a solution to a variety of problems. However, some have questioned whether certain applications of AI are more marketing gimmick than practical solution, with some high-profile examples like Tesla being cited as examples.

I, like many others, watched and read the articles that were published about the OpenAI project ChatGPT after it was released to the public in November. My opinion on ChatGPT is mixed. On one hand, I find it helpful for simple tasks. However, as other papers have noted, the more complex the question, the more likely ChatGPT is to fail. I don't have a problem with an AI failing in and of itself; OpenAI even notes that ChatGPT is currently in a free research preview. My concern is with "silent failing," or when ChatGPT provides an answer that it believes is completely correct but is actually incorrect. An opinion piece by Rupert Goodwins highlights the issues with this type of confident incorrectness. While most incorrect answers might be harmless, there could be some that are outright malignant and have significant consequences.

As a novice researcher, I have come to understand the importance of including a "related works" section in a research paper. This section is essential for properly crediting prior research and acknowledging the impact of other works on your own research. It is also an important way to demonstrate to publishers that your paper is novel and has value. A key part of this process is building an annotated bibliography, which requires reading a large number of papers from influential publishers such as ACM's Digital Library and IEEE Xplore.

This process can be extensive and time-consuming, and it is often necessary to do it twice - at the beginning of a new research project to ensure that your idea is novel, and at the end to make sure that no one else has published on the same topic.

ChatGPT is an alluring tool, the ability to quickly ask research related questions and highlight the most impactful papers based on reach/citations/etc. The ability to simply ask if there are prior research papers posted on a particular topic. While current researchers might rightfully scoff at the idea, I would contend that a more detailed and cross-platform search engine in comparison to the results generated from Google scholar would be a welcomed addition to a researchers toolkit.

As such, I was curious, what happens if I ask ChatGPT for research papers in my field as a starting point for a possible annotated bibliography. My query: “do you know of any research papers that use AI in automated assessment tools to teach novice students to code” yielded some seemingly normal results.

As any inquisitive researcher should do, I asked for a full citation to help with finding the paper in a digital library.

This immediately raised some questions. While ChatGPT was correct that ICSE 18 takes place in Gothenburg Sweden, it was the 40th international Conference. The 39th International Conference instead took place a year prior ICSE 17 in Buenos Aires Argentina.

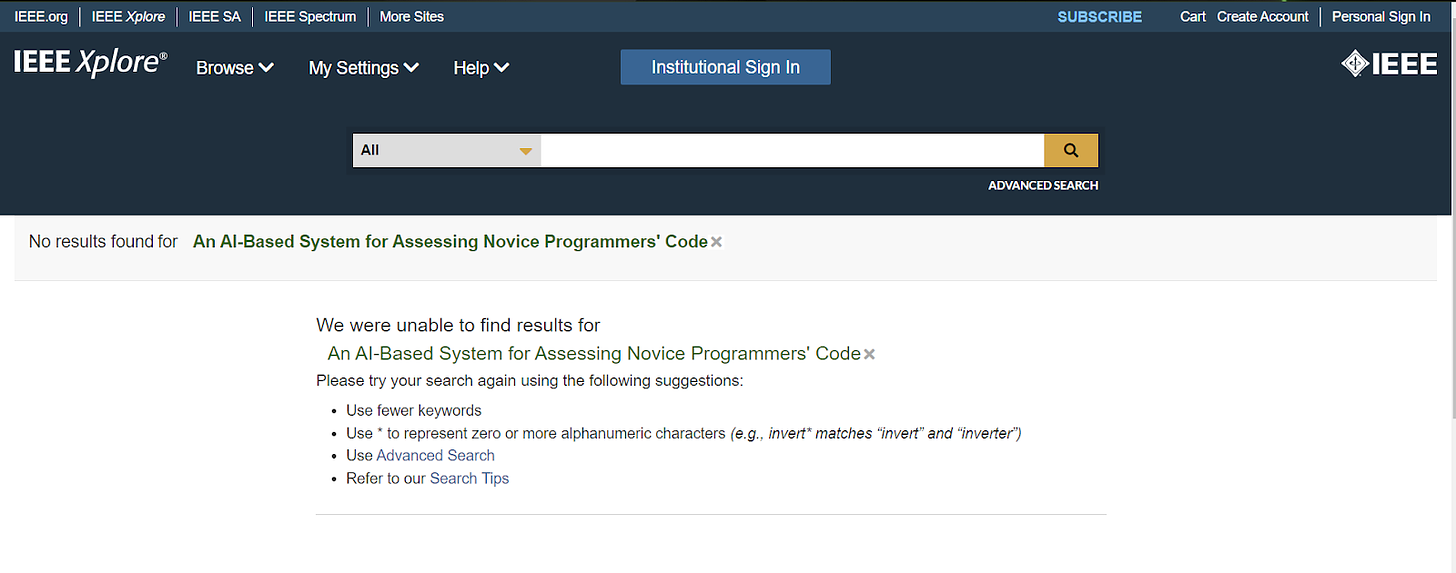

With the citation incorrect, I had only the paper title to go off when searching through databases. Given I had the exact title and authors of the paper, I assumed it would be trivial to find a PDF version of this paper, how wrong I was.

Despite searching through multiple sources including ACM Digital Library, IEEE Xplore, and Google Scholar, I have been unable to locate the research paper through any available methods. ChatGPT, which is currently disconnected from the internet, is unable to provide a direct link to the paper or refer to any resources that might contain it.

It's possible that I made a mistake in my search, and will have to issue a retraction on my first published article. Alternatively, it seems that ChatGPT, the AI in question, has either fabricated a paper, authors, and citation outright, or simply misquoted a preexisting work. This is a concerning development, and it raises serious questions about the reliability and credibility of artificial intelligence in the field of research.

As an individual without the resources to fully investigate the origins of this flawed result, I hope that by bringing attention to the issue through this article, mainstream publications will take up the cause and help uncover the root cause of the problem. Was it a result of incorrect training data, or is there a more sinister explanation, such as the creation of a completely fabricated paper?

As I mentioned at the beginning of this article, my opinion on ChatGPT, and AI more generally, is mixed. While it's true that ChatGPT has proven to be a useful tool for simple questions and helpful in debugging scripts, we cannot blindly trust it or any other AI as the ultimate authority on truth. Cathy O'Neil's warnings about the dangers of blindly trusting big data still weigh heavily on my mind as we increasingly rely on AI in our daily lives. While I believe AI has the potential to bring benefits to a plethora of fields, the question of who is responsible for ensuring its accuracy remains. Is it up to the creators of these systems, like OpenAI, to ensure their accuracy, or is it the responsibility of the users to understand that an AI's output should not be treated as gospel?